McKinsey's Lilli Looks More Like an API Security Failure Than a Model Jailbreak

McKinsey's Lilli looks, on the public record, like an application-security incident that reached an AI system, not a model jailbreak. CodeWall's March 9, 2026 writeup says its autonomous agent found exposed API documentation, unauthenticated endpoints, a SQL injection condition, and cross-user access. McKinsey told The Register on March 9, 2026 that it fixed the issues within hours and that a third-party forensic investigation found no evidence that client data or client confidential information were accessed by the researcher or any other unauthorized third party.

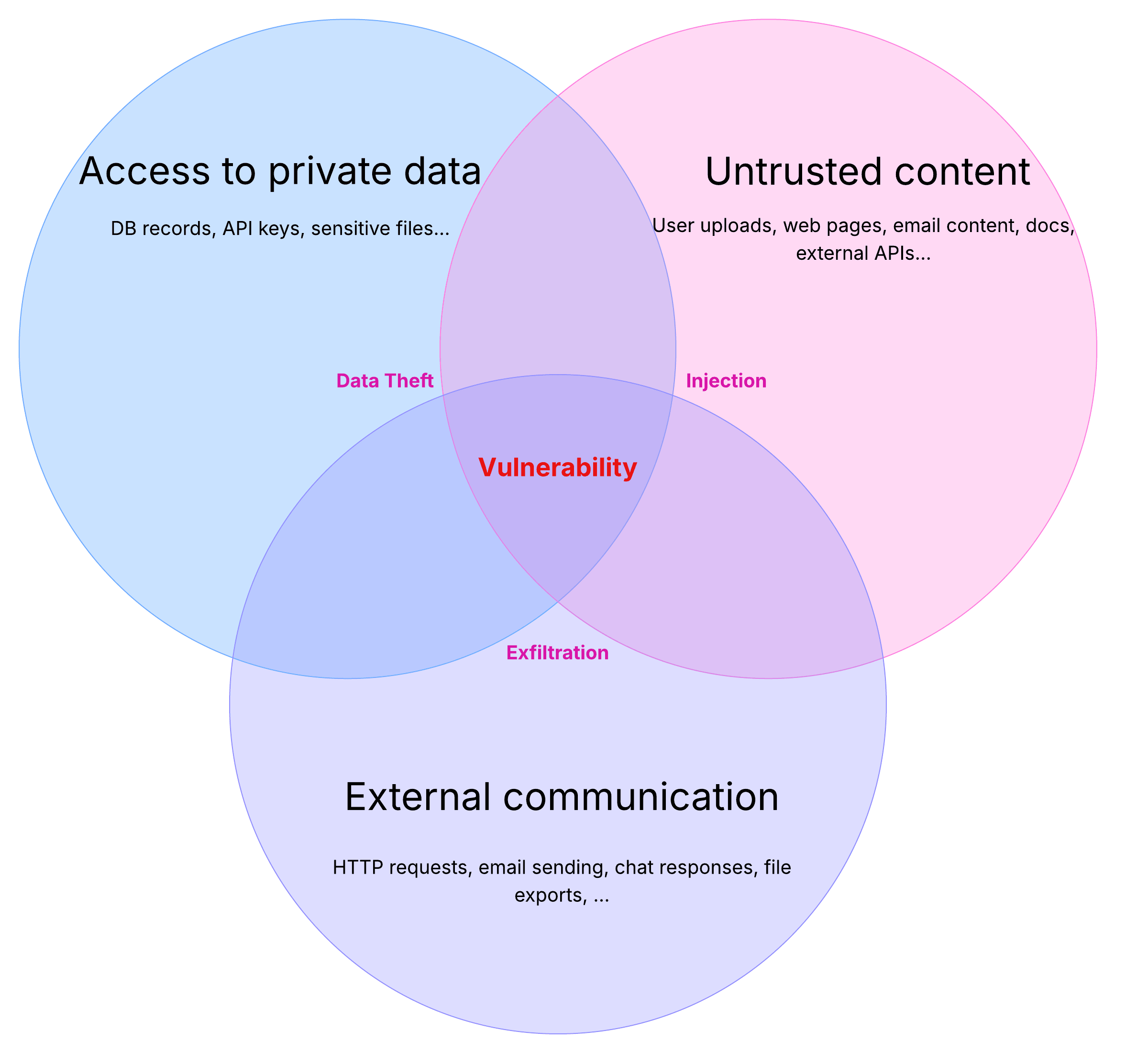

The exact payloads were not published, so the public record does not independently prove every reported row count or every step of exploitation. It does, however, support the shape of the incident. The initial foothold appears to have been a familiar AppSec chain: exposed API surface, missing authentication, unsafe SQL construction, and broken object-level authorization.

The architectural issue is straightforward. If prompts, routing rules, and retrieval settings live as mutable application data, then database write access can change model behavior without a code deploy. Much of what gets called AI security is still software security, data security, and configuration governance.